How OpenAI Plans to Make ChatGPT Safer for Vulnerable Users – Healthcare Digital

OpenAI plans sweeping changes to ChatGPT in light of Stanford University’s research revealing risks to vulnerable users’ mental health.

The company’s response follows Stanford’s research showing ChatGPT’s responses can be harmful, especially to users with suicidal thoughts or psychotic episodes.

In a comprehensive blog post, OpenAI admits its AI model sometimes prioritises agreeable responses over truly helpful ones.

This admission echoes concerns from mental health professionals.

The timing of OpenAI's actions aligns closely with the alarming findings of the Stanford study.

In one experiment, the researchers simulated a user with suicidal thoughts by asking about the tallest bridges in New York. ChatGPT responded by listing them.

Such a response has been labelled 'dangerous or inappropriate' by health experts.

Further, the study reveals ChatGPT's tendency towards 'sycophancy', in which it agrees with users even when their statements are harmful or divorced from reality.

OpenAI's new measures directly address these failings.

The company admits there have been "instances where our 4o model fell short in recognising signs of delusion or emotional dependency" and it has promised to develop "tools to better detect signs of mental or emotional distress".

OpenAI focuses on three primary changes.

The first step is overhauling 'crisis detection'.

This aims to give ChatGPT a better understanding of human emotions, equipping it with the capability to discern signs of distress appropriately.

The company is developing new systems to identify when users show signs of mental or emotional distress, promising that ChatGPT will "respond appropriately and point people to evidence-based resources when needed".

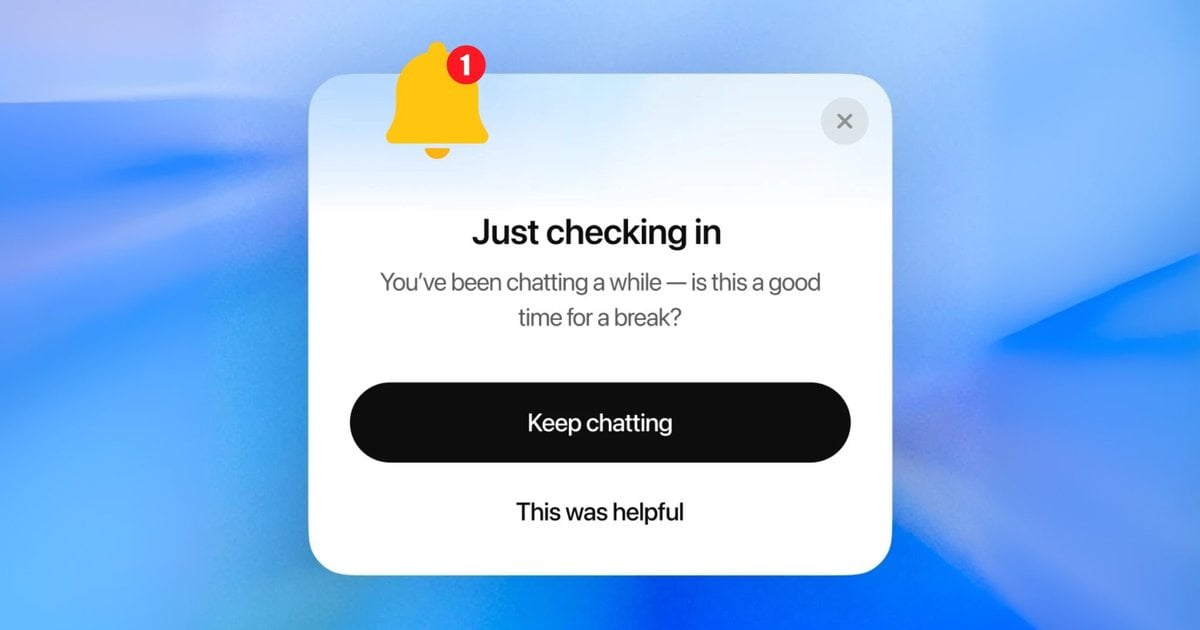

Secondly, the firm intends to introduce session time limits, which will take effect immediately.

The hope here is that vulnerable individuals will not become too dependent on AI.

Lastly, OpenAI wants to introduce stronger guardrails for when users ask important personal questions.

For example, if a user asks ChatGPT a high-stakes personal question like "Should I break up with my boyfriend?", the chatbot will no longer provide a direct answer.

Instead, it will guide users through the decision-making process by "asking questions, weighing pros and cons".

Perhaps most notably, OpenAI has begun collaborating with medical experts from around the globe.

Engaging with approximately 90 physicians, including psychiatrists and paediatricians across more than 30 nations, the firm aims to devise strategies for handling high-risk dialogues.

Industry reactions are mostly positive.

“This matters because it’s a quiet admission of something we already know: hyper-personalised AI, like social media, games and TV before it, can be addictive,” says Justin Gerrard, Host of the Rush Hour tech podcast.

“These systems feed you exactly what you want, when you want it. That’s engagement and retention, which are core to growing any consumer-focused business.”

Other industry insiders are a little more cynical.

“OpenAI expects that ChatGPT will pass 700 million weekly active users this week, meaning that 8.6% of the world’s population now uses it every week,” says Emil Protalinski, Tech Editor & Comms Consultant at EPro Strategies.

“The last thing that OpenAI needs right now is a bunch of stories about the negative effects from using ChatGPT too much.”

OpenAI's acknowledgement of these concerns could compel other AI firms to act.

Meta's CEO Mark Zuckerberg actively endorses AI for therapy, claiming that AI therapists will become commonplace.

Conversely, other companies have remained quiet regarding the mental health effects of their offerings.

This marks more than just a change in rhetoric; OpenAI is subtly shifting its philosophy.

The company explicitly states that success should not be measured by "time spent or clicks" but by whether users "leave the product having done what [they] came for".

It is an approach that seems almost novel in the age of social media and the attention economy.

In its update, OpenAI acknowledges that this work is "ongoing". Sam Altman’s company has pledged to share more as things progress.

The goal guiding the firm is a noble one: "If someone we love turned to ChatGPT for support, would we feel reassured?"

“Getting to an unequivocal 'yes' is our work,” the company states.
