Open Interpreter: An Interesting AI Tool to Locally Run ChatGPT-Like Code Interpreter – Beebom

After Auto-GPT and Code Interpreter API, a new open-source project is making waves in the AI community. The project is named Open Interpreter, and it was developed by Killian Lucas and a team of open-source contributors. It combines ChatGPT plugin functionalities, Code Interpreter, and something like Windows Copilot to bring an AI assistant to any platform. From a friendly Terminal interface, you can ask it to work with the operating system, files, folders, programs, and the internet. So if you are interested, learn how to set up and use Open Interpreter locally on your PC.

Things to Remember Before You Proceed

1. To take full advantage of Open Interpreter, you need an OpenAI API key with access to GPT-4. The GPT-3.5 model simply doesn’t cut it and throws multiple errors while running code. Yes, using the GPT-4 API is expensive, but it unlocks a lot of new capabilities on your system.

2. I would suggest not running the models locally unless you have a good understanding of the build process. The project is currently buggy, especially for local models on Windows (at least for us). Also, you’ll need beefy hardware to get good performance from larger models.

Set Up the Python Environment

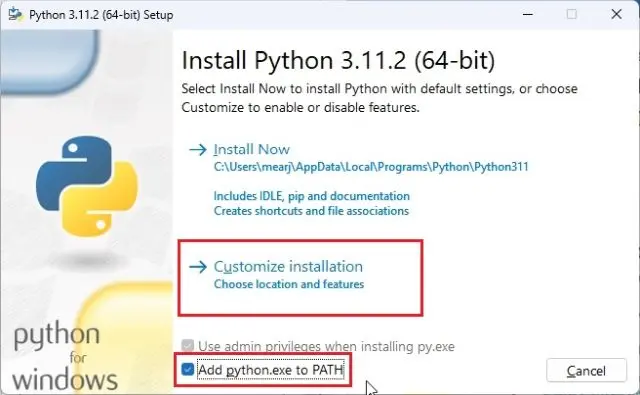

1. First off, you need to install Python and Pip on your PC, Mac, or Linux computer. Follow the linked guide for detailed instructions.

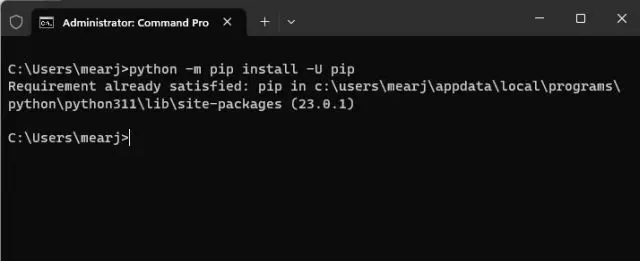

2. Next, open the Terminal or CMD and run the below command to update Pip to the latest version.

python -m pip install -U pip

3. Now, run this command to install Open Interpreter on your machine.

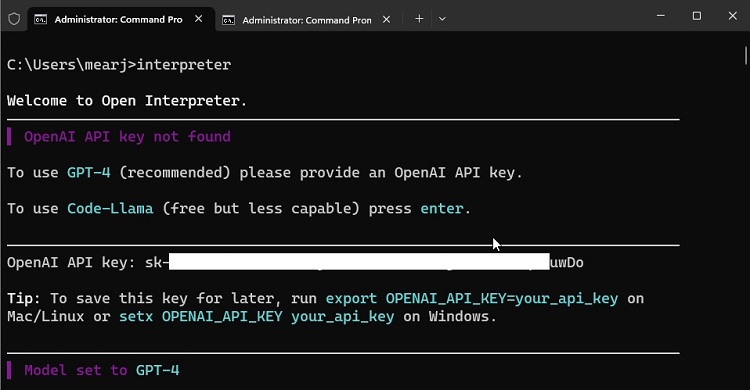
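After the install steps above, a quick sanity check can confirm that the pieces are in place. The following is a minimal sketch, not part of Open Interpreter itself: `check_environment` is a hypothetical helper, and the Python 3.10 minimum is an assumption that this article does not state, so check the project's README for the real requirement.

```python
import shutil
import sys

# Assumed minimum Python version; this article does not state one.
MIN_PYTHON = (3, 10)


def check_environment(min_python=MIN_PYTHON):
    """Report whether Python, pip, and the `interpreter` command look usable."""
    return {
        # Compare only (major, minor) against the assumed minimum.
        "python_ok": sys.version_info[:2] >= min_python,
        # pip may be exposed as `pip` or `pip3` depending on the platform.
        "pip_found": any(shutil.which(name) for name in ("pip", "pip3")),
        # `interpreter` is the command the article uses to start the tool.
        "interpreter_found": shutil.which("interpreter") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'yes' if ok else 'no'}")
```

If `interpreter_found` comes back as "no" right after installing, the likely cause is that pip's scripts directory is not on your PATH.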

1. Once Open Interpreter is installed, execute one of the below commands based on your preference.

- For the GPT-4 model. Must have access to GPT-4 API from OpenAI.

interpreter- For the GPT-3.5 model. Available to free users.

interpreter --fast- Run the Code-llama model locally. Free to use. You need good resources on your computer.

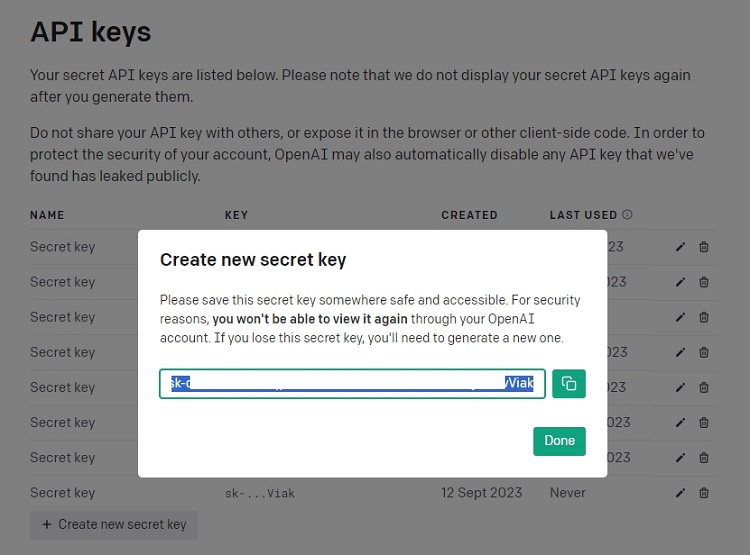

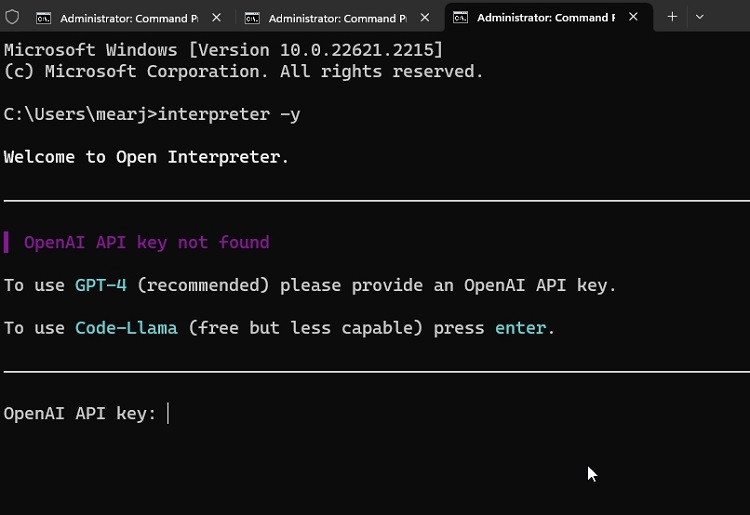

interpreter --local2. I am going with the OpenAI GPT-4 model, but if you don’t have access to its API, you can choose GPT-3.5. Now, go ahead and get an API key from OpenAI’s website. Click on “Create new secret key” and copy the key.

3. Paste the API key in the Terminal and hit Enter. By the way, you can always press “Ctrl + C” to exit from Open Interpreter.
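Before pasting a key, it can be useful to see whether one is already present in your environment. Here is a small sketch under two assumptions: the key lives in the `OPENAI_API_KEY` environment variable (the name used by OpenAI's tooling), and `masked_api_key` is a hypothetical helper written for this example. It prints only a masked preview so the full key never appears on screen.

```python
import os


def masked_api_key(env=None, var_name="OPENAI_API_KEY"):
    """Return a masked preview of the stored key, or None if no key is set."""
    env = os.environ if env is None else env
    key = env.get(var_name, "").strip()
    if not key:
        return None
    if len(key) <= 8:
        # Too short to show any part of it safely.
        return "*" * len(key)
    # Show only the first three and last four characters.
    return f"{key[:3]}...{key[-4:]}"


if __name__ == "__main__":
    preview = masked_api_key()
    print(preview if preview else "No API key found in the environment.")
```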

How to Use Open Interpreter on Your PC

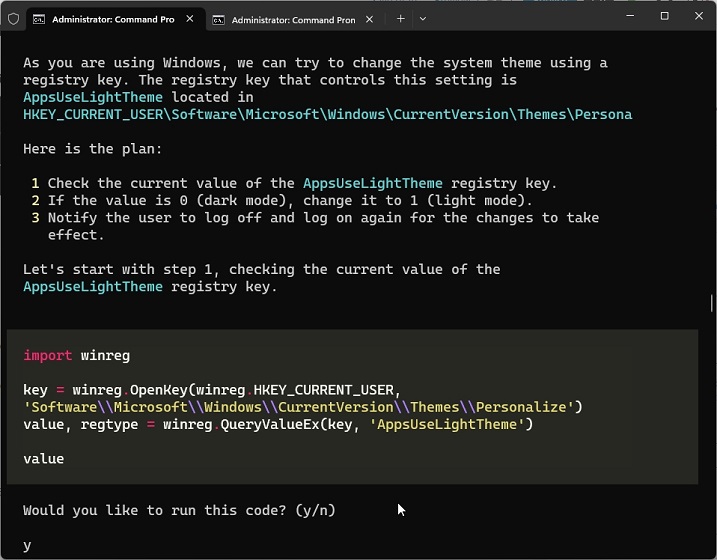

1. To start using Open Interpreter, I asked it to set my system to dark mode and it worked. As I am using Windows, it created a Registry key and changed the theme seamlessly.

2. Next, I asked it to create a simple web-based Timer app, and it built one in no time. This could well turn out to be a great way to earn money using the power of AI.

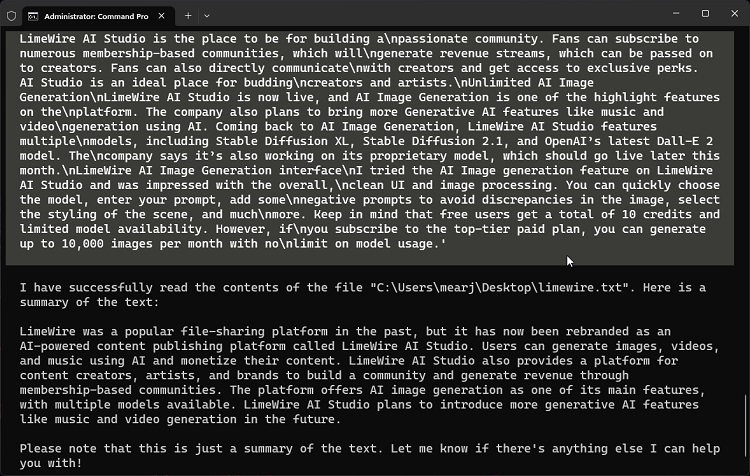

3. Next, I asked Open Interpreter to summarize a local text document and it worked well.

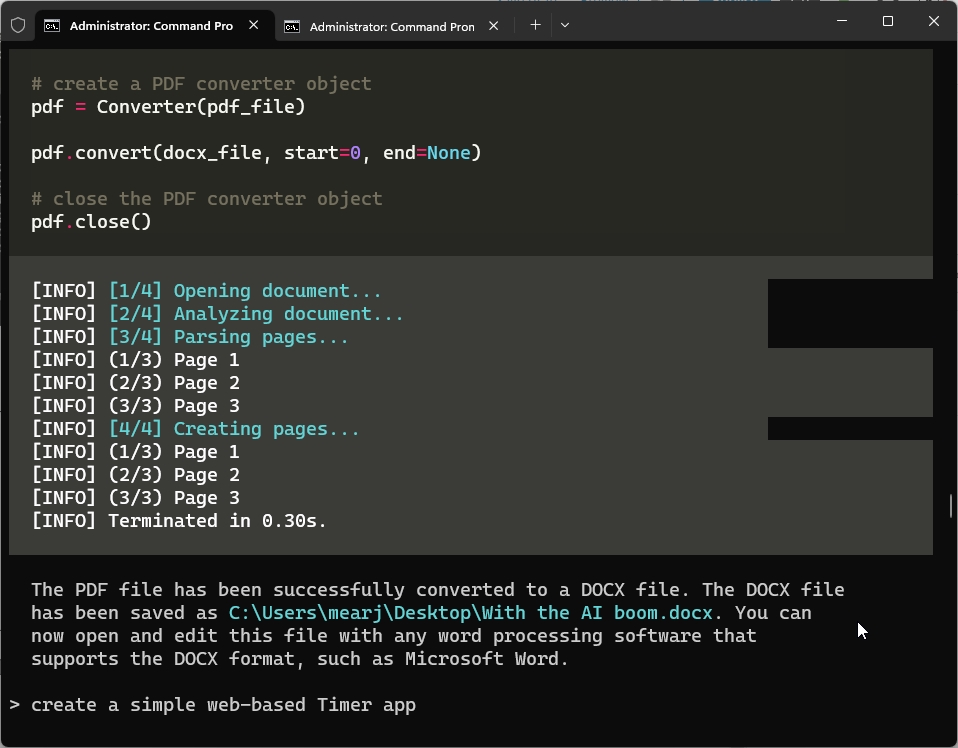

4. I also asked it to convert a PDF file to DOCX and it worked flawlessly.

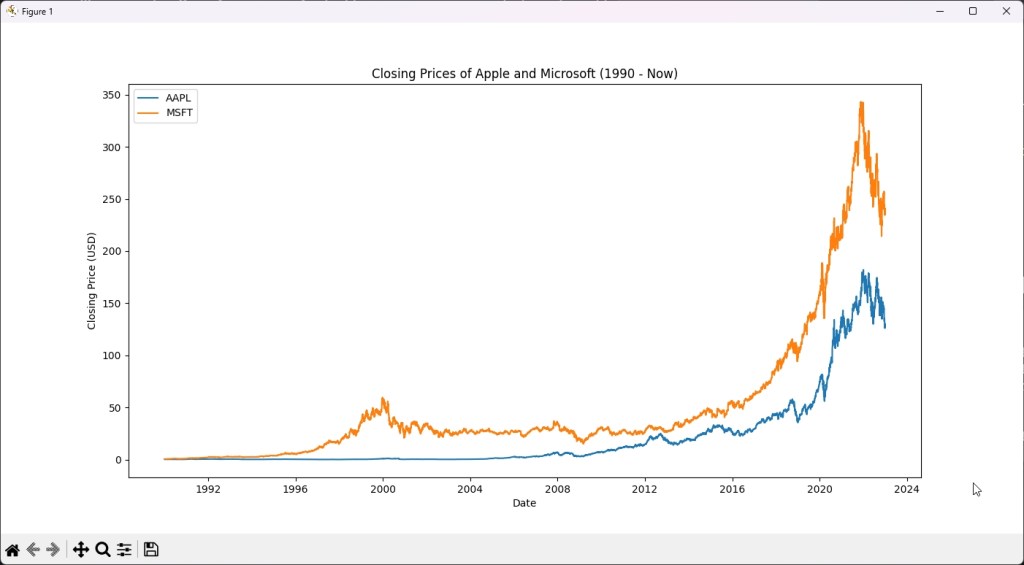

5. Next, it quickly pulled data from the internet and showed the stock prices of Apple and Microsoft in a visual chart. You can try many more interesting use cases like these with Open Interpreter.
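To give a sense of what happens behind a request like the dark-mode one in step 1, here is a rough sketch of the kind of code involved. The article does not show the code Open Interpreter actually generated, so this is an assumption: it uses the `Personalize` registry key and the two value names commonly used for the Windows theme preference, and the function names are hypothetical.

```python
import sys

# Registry location commonly used for the Windows light/dark preference.
# Assumption: the article does not say which key Open Interpreter touched.
PERSONALIZE_KEY = r"Software\Microsoft\Windows\CurrentVersion\Themes\Personalize"
THEME_VALUES = ("AppsUseLightTheme", "SystemUsesLightTheme")


def theme_settings(dark=True):
    """Map each registry value name to the DWORD it should hold (0 = dark, 1 = light)."""
    flag = 0 if dark else 1
    return {name: flag for name in THEME_VALUES}


def apply_theme(dark=True):
    """Write the theme preference for the current user. Windows only."""
    if sys.platform != "win32":
        raise OSError("This sketch only applies to Windows.")
    import winreg  # imported lazily so the module still loads on other platforms

    with winreg.CreateKeyEx(
        winreg.HKEY_CURRENT_USER, PERSONALIZE_KEY, 0, winreg.KEY_SET_VALUE
    ) as key:
        for name, value in theme_settings(dark).items():
            winreg.SetValueEx(key, name, 0, winreg.REG_DWORD, value)


if __name__ == "__main__":
    # Only print the planned values; call apply_theme() yourself on Windows.
    print(theme_settings(dark=True))
```

Seeing the code spelled out like this is also a reminder of why Open Interpreter asks for permission before running anything: a single request can change system settings.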

Nifty Tips to Use Open Interpreter Locally

1. Open Interpreter always asks for your permission before running the code. After a point, it can get annoying. If you want to avoid it, you can start Open Interpreter in the below fashion.

interpreter -y

2. Next, you can permanently set the OpenAI API key in the command-line interface in the below fashion. This will save you a lot of time. Replace “your_api_key” with the actual key.

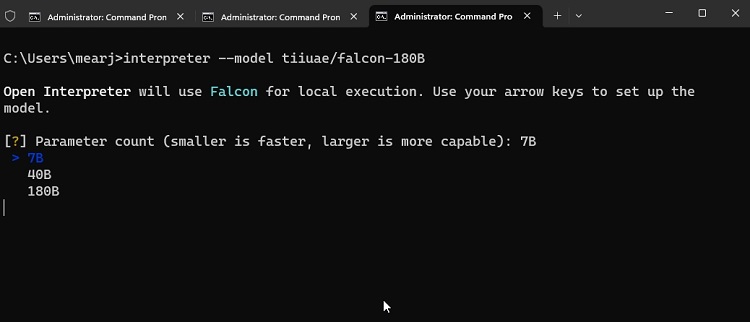

3. If you want to use a different model locally, you can define it like this. The model must be hosted on Hugging Face, and the repo ID should be passed just like below. You can find the best open-source AI models from our linked article.

interpreter --model tiiuae/falcon-180B
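The flags covered in this article can be combined into a single launch command. The sketch below assembles that command from the options mentioned above (`--fast`, `--local`, `--model`, and `-y`). `build_command` is a hypothetical helper, and treating `--fast` and `--local` as mutually exclusive is an assumption based on the article asking you to pick one of them.

```python
import shlex


def build_command(fast=False, local=False, auto_run=False, model=None):
    """Assemble an `interpreter` command line from the flags used in this article."""
    if fast and local:
        # Assumption: the article presents these as alternatives, so pick one.
        raise ValueError("Choose either fast or local, not both.")
    command = ["interpreter"]
    if fast:
        command.append("--fast")   # GPT-3.5 model
    if local:
        command.append("--local")  # run a model on your own machine
    if model:
        command += ["--model", model]  # Hugging Face repo ID
    if auto_run:
        command.append("-y")       # skip the permission prompt before running code
    return command


if __name__ == "__main__":
    # Print the command instead of running it, so you can review it first.
    print(shlex.join(build_command(model="tiiuae/falcon-180B", auto_run=True)))
```

To actually launch the tool from Python, you could pass the returned list to `subprocess.run`. Keep in mind that `-y` removes the confirmation step, so use it only with requests you trust.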

In case you find the project too complex or buggy, check out our list of the best AI coding tools. These tools give you a better coding experience, whether you use your favorite IDE or a simple code editor.
